Facebook Pulls Back The VR Video Push

For the uninitiated, 360 video is filmed in all directions at once and lets the viewer experience the action in front, side to side or from behind. You don't get depth perception and the ability to move around as you do in true virtual reality, but it's a start.

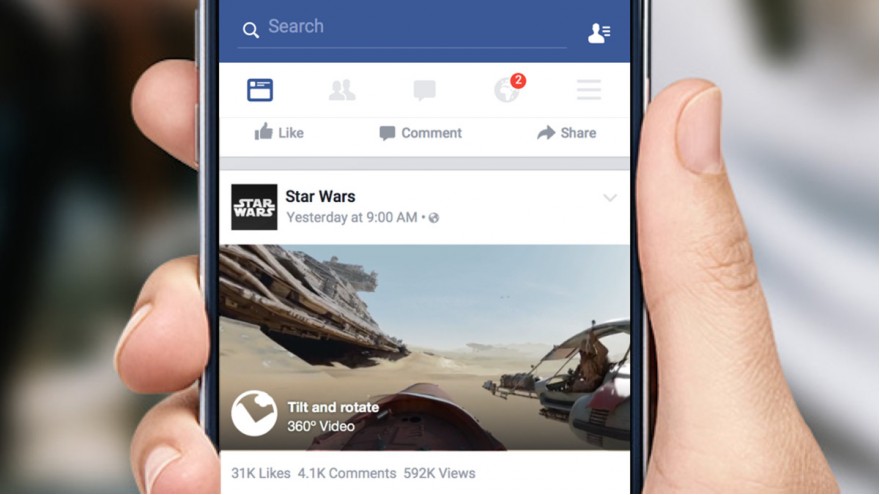

Facebook is going big on video, including 360-degree VR-lite video. Now, in a new blog post, the company has explained a little more about what's going on behind the scenes with its new support for immersive video clips.

It's also the most accessible form of VR right now: If you don't have a headset, you can watch 360 video in your web browser instead, clicking and dragging with your mouse rather than moving your head. It also works well on the latest smartphones, which change the point of view as the handset moves around.

That effectively means all of Facebook's one-and-a-half-billion users can get started with this basic form of VR right away. (YouTube has a dedicated channel for the format too.) It's no surprise that the owner of Oculus has introduced these kinds of clips to its News Feed.

But even if 360 movies are a step below true VR, they still require an awful lot of processing and engineering to produce a smooth and stable end-user experience, as Facebook details in its new blog post.

The Challenges Of 360 Video

Just serving up ordinary online video is quite a challenge, even if the end-user experience seems like nothing special to you and me. Trying to put a spherical format on a 2D screen brings a whole new set of difficulties to contend with.

It's like dealing with a massive map of the globe—a 3D image converted to 2D—but with mammoth file sizes and moving pictures along the way too. Facebook engineers Evgeny Kuzyakov and David Pio describe it as "a generous playground" for coders.

The developers got around the issue by using cube maps, already a mainstay of computer graphics technology. These maps cut out geometry distortion, give equal importance to all faces (so your spherical video looks as smooth as possible) and simplify some of the processes of projecting the image.

You can get a sense from the images below how a 360 video source gets converted into a cube map.

"In order to implement our transformation from equirectangular layout to cube map, we created a custom video filter that uses multiple-point projection on every equirectangular pixel to calculate the appropriate value for every cube map pixel," write Kuzyakov and Pio. "That's a lot of calculations. As an example, a 10-minute 360 video has 300 billion pixels that have to be mapped and stretched all over the cube."

The top quarter of the equirectangular video (the spherical source flattened to 2D) becomes the top cube face and the bottom quarter becomes the bottom face. The middle 50 percent of the clip is split into four cube faces, and the six faces are then stitched back together. Ultimately the process outputs the same information as the source but with 25 percent fewer pixels per frame.
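Both figures quoted above can be sanity-checked with some quick arithmetic. The sketch below is only an illustration: the article does not give a resolution or frame rate, so the 4096x2048 frame at 60 frames per second is an assumption, picked because it lands close to the 300 billion figure. The 25 percent saving, by contrast, holds for any 2:1 equirectangular frame.

```python
# Assumptions for illustration (not stated in the article): a 2:1 equirectangular
# frame of 4096x2048 pixels, played at 60 frames per second.
EQ_WIDTH, EQ_HEIGHT = 4096, 2048
FPS = 60


def clip_pixels(width, height, fps, seconds):
    """Total pixels across every frame of a clip."""
    return width * height * fps * seconds


def cube_map_pixels(eq_width, eq_height):
    """Pixels in a six-face cube map built from a 2:1 equirectangular frame.

    The middle half of the frame is split into four square faces, so each
    face is eq_height // 2 pixels on a side (the same as eq_width // 4).
    """
    face_size = eq_height // 2
    return 6 * face_size * face_size


total = clip_pixels(EQ_WIDTH, EQ_HEIGHT, FPS, 10 * 60)
saving = 1 - cube_map_pixels(EQ_WIDTH, EQ_HEIGHT) / (EQ_WIDTH * EQ_HEIGHT)

print(f"10-minute clip: {total / 1e9:.0f} billion pixels")   # 302 billion
print(f"cube map saving per frame: {saving:.0%}")            # 25%
```

The saving comes from the top and bottom quarters: each is a full-width strip of the source frame, yet each is squeezed into a single face the same size as the four middle ones.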

Simply put, for each frame of video, the spherical source is placed inside a cube and then expanded until every pixel is filled in.
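For the curious, here is a rough sketch of what that projection involves for a single pixel. It is not Facebook's filter: the engineers describe sampling multiple points per pixel, while this simplified version takes one nearest sample, and the face names and axis orientation are assumptions made for the example. It works backwards, asking for each cube-face pixel which pixel of the equirectangular frame it should be read from.

```python
import math

# Assumed orientation (hypothetical): x points right, y up, z straight ahead.
# Each face maps (a, b) in [-1, 1] x [-1, 1] to a direction on the cube surface.
FACES = {
    "front":  lambda a, b: (a, b, 1.0),
    "back":   lambda a, b: (-a, b, -1.0),
    "right":  lambda a, b: (1.0, b, -a),
    "left":   lambda a, b: (-1.0, b, a),
    "top":    lambda a, b: (a, 1.0, -b),
    "bottom": lambda a, b: (a, -1.0, b),
}


def cube_to_equirect(face, col, row, face_size, eq_width, eq_height):
    """Return the (x, y) equirectangular source pixel for one cube-face pixel."""
    # Map the pixel centre to [-1, 1]; row 0 is the top of the face.
    a = 2.0 * (col + 0.5) / face_size - 1.0
    b = 1.0 - 2.0 * (row + 0.5) / face_size
    x, y, z = FACES[face](a, b)

    lon = math.atan2(x, z)                 # -pi..pi, 0 means straight ahead
    lat = math.atan2(y, math.hypot(x, z))  # -pi/2..pi/2, positive means up

    u = (lon / (2 * math.pi) + 0.5) * eq_width
    v = (0.5 - lat / math.pi) * eq_height
    # Longitude wraps around the frame; latitude is clamped at the poles.
    return int(u) % eq_width, min(int(v), eq_height - 1)


# The centre of the front face reads from the centre of the source frame.
print(cube_to_equirect("front", 1, 1, 3, 8, 4))
```

Running a lookup like this for every pixel of all six faces, on every frame, is where the heavy computation comes from.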

Processing Demands, And The Future

Kuzyakov and Pio also offer some details of the technical demands of storing 360 content. Clips can use up 22GB of storage per hour, while true stereoscopic 360 videos (with separate views for each eye) need 44GB per hour. That's a lot of bytes to store and to serve up.
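To put those storage numbers in streaming terms, they can be converted to an average bitrate. This is back-of-the-envelope arithmetic rather than anything from the blog post, and it assumes decimal units (1GB = 10^9 bytes).

```python
def gb_per_hour_to_mbps(gb_per_hour):
    """Average bitrate in megabits per second, assuming 1 GB = 10**9 bytes."""
    bits_per_hour = gb_per_hour * 1e9 * 8
    return bits_per_hour / 3600 / 1e6


for label, size_gb in [("360 video", 22), ("stereoscopic 360 video", 44)]:
    print(f"{label}: {gb_per_hour_to_mbps(size_gb):.1f} Mbit/s")
# 360 video: 48.9 Mbit/s
# stereoscopic 360 video: 97.8 Mbit/s
```

At roughly 49 and 98 megabits per second, it is easy to see why getting the bitrate down is a priority.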

"Part of [decreasing the bitrate] was accomplished in the custom video filter we applied; we also attacked it during the encoding process," the Facebook engineers write. "Chopping the video up, processing it on multiple machines, and stitching it back together without any glitches or loss of audio-visual synchronization is tricky... In order to process large 360 videos in a reasonable length of time, we use distributed encoding to split the encoding process across many machines."

Thanks to Facebook's extensive infrastructure and networked processing power, 360 videos can be routed through a dedicated tier of machines. The company's engineers created the custom video filter to take on the CPU- and memory-intensive workload.
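The chop, process and stitch approach the engineers describe can be sketched in miniature. This is a toy model, not Facebook's pipeline: threads stand in for separate machines, and `encode_chunk` is a hypothetical placeholder for a real encoder. What it shows is the part that has to go right, namely chunks that cover the clip with no gaps or overlaps, and results reassembled in their original order.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(frame_count, chunk_size):
    """Return (start, end) frame ranges covering the clip with no gaps or overlaps."""
    return [
        (start, min(start + chunk_size, frame_count))
        for start in range(0, frame_count, chunk_size)
    ]


def encode_chunk(frame_range):
    """Hypothetical stand-in for a real encoder: tags each frame index."""
    start, end = frame_range
    return [f"encoded-{i}" for i in range(start, end)]


def encode_distributed(frame_count, chunk_size, workers=4):
    """Encode chunks in parallel, then stitch them back together in order."""
    chunks = split_into_chunks(frame_count, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in input order, whichever worker finishes first.
        encoded = list(pool.map(encode_chunk, chunks))
    return [frame for chunk in encoded for frame in chunk]


print(split_into_chunks(10, 4))   # [(0, 4), (4, 8), (8, 10)]
print(len(encode_distributed(10, 4)))   # 10
```

A real system also has to keep audio aligned across chunk boundaries, which is the synchronization problem the engineers call tricky.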

There's still room for improvement (360 videos can't yet be detected automatically when uploaded, for example), but Kuzyakov and Pio say the team has made "great strides" so far. Higher resolutions and true 3D (stereoscopic) videos are on the way in the near future, and "many of the key challenges" have now been overcome. Before too long, 360 videos will be as much a part of the fabric of the web as JPEGs or GIFs.
